In the recent World Quality Report, 61% of respondents said that their biggest barrier to success with automated UI testing was the frequency of application change. Logically, the push towards Continuous Integration and DevOps only accentuates this challenge. Rephrase “application change” as “script maintenance” and it is clear that this challenge is not new.

Essentially, test automation is brittle, and this has been its Achilles heel for decades. Changes to the user interface of the application under test, however small (and often invisible to the end user), inevitably break traditional test automation scripts, necessitating constant maintenance. Let us briefly look at why.

Nearly all automation tools, from legacy desktop tools to cutting-edge open source mobile and web tools, share one fundamental problem: how does a script author communicate the intended target UI element? In other words, how do I articulate which button to click, or which field to enter a value into?

The manual test script and execution paradigm is more robust: a simple natural-language intent is easily translated into direct action against the application under test. For example:

“Check the value of the Cart item count is 1”

As a user or manual tester, I would bet you are already picturing the top right of a web page and looking for a shopping cart image with a number nearby.

I’m guessing something along these lines:

As humans, we are equipped with several things traditional automation products lack: the ability to process natural language, contextual awareness, and the ability to call upon previous experience.

For the manual test step above, here is the equivalent Selenium code (written with Selenium’s Python bindings, though any language binding looks much the same):

    from selenium.webdriver.common.by import By

    # driver is an already-started WebDriver session
    actual = driver.find_element(By.XPATH, "/html/body/div/div[2]/div[1]/div[2]/a/span").text
    assert actual == "1"

The automated script author has had to articulate the target element in technical terms, usually through inspection and analysis: finding the id, XPath, CSS or other technical “selector” using their contextual knowledge of what constitutes the “Cart item count” and their skill in crafting a robust id, XPath or CSS reference.

So, stepping back, why does this matter? Any refactoring of the UI, such as inserting a new breadcrumb view or menu item, or changing a supporting UI component library, usually breaks these references. This is particularly problematic with positional or hierarchical XPath and CSS selectors, which rely on an element’s position in the page hierarchy (the DOM).
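To make the brittleness concrete, the following Python sketch (standard library only) parses a simplified, hypothetical page before and after a breadcrumb bar is added, then looks up the cart count twice: with the positional path from the Selenium example, and with an attribute-based path. The markup and the `cart-count` class are invented for illustration, and because `xml.etree.ElementTree` supports only a subset of XPath, the paths are written relative to the `<html>` root.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified page markup (XHTML so ElementTree can parse it).
# The cart count sits at /html/body/div/div[2]/div[1]/div[2]/a/span.
BEFORE = """<html><body><div>
  <div class="promo-banner">Free delivery</div>
  <div>
    <div class="header">
      <div class="logo">Shop</div>
      <div><a href="/cart"><span class="cart-count">1</span></a></div>
    </div>
  </div>
</div></body></html>"""

# The same page after a breadcrumb bar is inserted above the main content.
AFTER = BEFORE.replace(
    '<div class="promo-banner">Free delivery</div>',
    '<div class="promo-banner">Free delivery</div>\n  <div class="breadcrumb">Home / Blouses</div>',
)

# Positional path (relative to <html>) equivalent to the XPath in the Selenium example.
POSITIONAL = "body/div/div[2]/div[1]/div[2]/a/span"
# Attribute-based alternative that does not depend on position in the DOM.
BY_CLASS = ".//span[@class='cart-count']"


def cart_count(markup: str, path: str):
    """Return the text of the element at `path`, or None if the path no longer matches."""
    element = ET.fromstring(markup).find(path)
    return None if element is None else element.text


for label, markup in (("before", BEFORE), ("after", AFTER)):
    print(label, cart_count(markup, POSITIONAL), cart_count(markup, BY_CLASS))
```

This prints `before 1 1` and then `after None 1`: the positional path silently stops matching once a sibling is inserted, while the attribute-based path survives. It would still break, though, if the class were renamed.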

When selector references break, they need to be updated, traditionally by re-inspecting the application and updating the code, a central selector library, or a model.

So: UI changes are bad for automation, and application change is problematic. Maintenance overhead rises in proportion to the rate of change, hence the 61% facing that challenge.

AI and ML technology is being applied everywhere. With the availability of ML frameworks and computing power, and the backing of big vendors such as Google, IBM and Microsoft, we have seen a sharp rise in vendors adopting AI and ML in their solution sets. AI has the potential to revolutionize the way we write automated tests and make them more resilient to application change. Let’s explore how.

Let’s look at the problem of target intent another way: how do we, as a user or manual tester, interpret a given step? Take our step:

“Check the value of the Cart item count is 1”

The first thing we do is home in on the target description, “Cart item count”. If we are not familiar with the application, we look around for something that seems to fit the bill. We subconsciously look for different characteristics and ask ourselves questions:

We take visual and descriptive clues from the application and use our experience and contextual knowledge to match what we see to the intended target.

In effect, we are mentally applying the classic duck test:

“If it looks like a duck, swims like a duck and sounds like a duck, it’s probably a duck”.

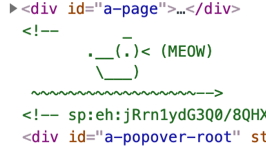

(As an aside, a mysterious nod to the duck test, their meowing duck, can be found buried in the DOM of Amazon’s homepage.)

Generally, however, the more we have to rely on contextual knowledge, or the more questions we ask ourselves, the harder the UI is to use. Steve Krug’s famous book “Don’t Make Me Think” discusses this concept.

As a consequence, modern applications do a pretty good job of being self-describing, whether for accessibility, following the Web Accessibility Initiative (WAI) guidelines, or simply for plain old usability. Rarely will you find unlabeled fields or images without alt text.

(What you do find, however, are meowing ducks: spans acting like buttons, and so on.)

How can we harness the increasingly self-describing application landscape to make automated test creation and maintenance easier, and tests more robust against application change?

Particular types of AI/ML models work in a similar way to humans: they process characteristics, weigh up their importance and provide the likelihood of a match based on previous examples. A kind of black-box duck test.
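A black-box duck test of this kind can be approximated, very loosely, by a logistic model: each observed characteristic contributes a weight, and the total is squashed into a likelihood between 0 and 1. The Python sketch below is an illustration only; the feature names and weights are invented rather than learned, and it is not a description of how any particular product, Scriptworks included, scores elements.

```python
import math

# Hypothetical characteristics for the description "Cart item count", with
# illustrative hand-picked weights. A trained model would learn such weights
# from labelled examples instead.
WEIGHTS = {
    "text_is_integer": 1.5,      # looks like a count
    "near_cart_icon": 2.5,       # sits beside a shopping-cart image
    "in_top_right": 1.0,         # where carts usually live
    "label_mentions_cart": 3.0,  # id, class, aria-label or alt text mentions "cart"
}
BIAS = -4.0  # start sceptical: with no evidence, the likelihood is low


def match_probability(features: dict) -> float:
    """Sum the weights of the characteristics present and squash the total into 0..1 (logistic)."""
    score = BIAS + sum(weight for name, weight in WEIGHTS.items() if features.get(name))
    return 1.0 / (1.0 + math.exp(-score))


cart_badge = {"text_is_integer": True, "near_cart_icon": True,
              "in_top_right": True, "label_mentions_cart": True}
quantity_box = {"text_is_integer": True}

print(round(match_probability(cart_badge), 3))    # looks, swims and quacks like the cart count
print(round(match_probability(quantity_box), 3))  # only one clue matches
```

The cart badge scores about 0.98 while the quantity box, which only "looks like a number", scores about 0.08. The point is the shape of the calculation, not the particular numbers.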

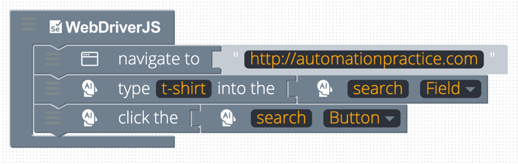

Ideally, we should be able to write our test in a form as close as possible to natural language and let the AI figure out what we are talking about:

“Check the value of the Cart item count is 1”

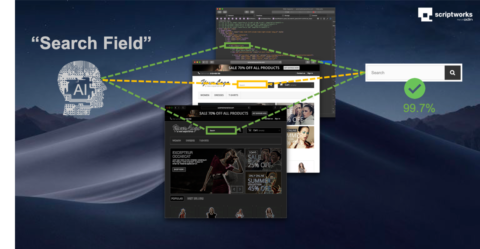
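Before any element matching can happen, a step like this has to be split into an action, a target description and, for checks, an expected value. The Python sketch below does that with two hypothetical regular-expression templates; the template wording, the `Step` type and `parse_step` are assumptions for illustration, not the Scriptworks step grammar.

```python
import re
from typing import NamedTuple, Optional


class Step(NamedTuple):
    """A parsed test step."""
    action: str
    target: str              # semantic description handed to the element matcher
    expected: Optional[str]  # expected value for checks; None for actions such as clicks


# Two hypothetical step templates; a real authoring tool would need many more.
CHECK = re.compile(r"^check the value of the (?P<target>.+?) is (?P<expected>.+)$", re.IGNORECASE)
CLICK = re.compile(r"^click the (?P<target>.+)$", re.IGNORECASE)


def parse_step(text: str) -> Step:
    """Split a natural-language step into action, target description and expected value."""
    text = text.strip().strip('"“”')  # tolerate straight or curly quotes around the step
    if m := CHECK.match(text):
        return Step("check", m["target"], m["expected"])
    if m := CLICK.match(text):
        return Step("click", m["target"], None)
    raise ValueError(f"Unrecognised step: {text!r}")


print(parse_step("Check the value of the Cart item count is 1"))
print(parse_step("Click the Add To Cart button in the Blouse Product Card"))
```

The first call yields `Step(action='check', target='Cart item count', expected='1')`. Only the target, “Cart item count”, needs the AI's help; the action and expected value are plain text. The click target keeps its “in the” clause, which a container-aware matcher can resolve further.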

We are introducing a revolutionary Selector-Free Element Identification technology into the Scriptworks visual scripting interface for Selenium and Appium.

Using a semantic description and an element type, our selector technology analyses the elements of an application, looking at visible and non-visible characteristics and surrounding features. An AI model scores each candidate on these characteristics, producing a closest match.
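As a rough, non-AI stand-in for this kind of scoring, the Python sketch below filters elements by type and ranks them by how many words of the semantic description appear in their visible text, their non-visible attributes (id, class, `aria-label` and so on) and their surrounding text, with visible text weighted highest. The `Element` structure, the weights and the crude plural handling are all assumptions; a trained model would replace the hand-written `score` function.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Element:
    """A hypothetical, flattened view of one UI element and the clues that describe it."""
    tag: str                                   # element type, e.g. "button", "input", "span"
    text: str = ""                             # visible text
    attrs: dict = field(default_factory=dict)  # non-visible clues: id, class, aria-label, alt...
    nearby: str = ""                           # surrounding text, e.g. a label or icon alt text


def tokens(s: str) -> set:
    """Lower-case words; splits camelCase and kebab-case and crudely drops a plural 's'."""
    s = re.sub(r"([a-z])([A-Z])", r"\1 \2", s)
    words = re.findall(r"[a-z0-9]+", s.lower())
    return {w[:-1] if len(w) > 3 and w.endswith("s") else w for w in words}


# Illustrative weights: visible text counts most, surrounding text least.
SOURCE_WEIGHTS = (("text", 1.0), ("attrs", 0.8), ("nearby", 0.5))


def score(description: str, el: Element) -> float:
    """Average, over the description's words, of the best weight of any source containing that word."""
    wanted = tokens(description)
    if not wanted:
        return 0.0
    sources = {
        "text": tokens(el.text),
        "attrs": tokens(" ".join(str(v) for v in el.attrs.values())),
        "nearby": tokens(el.nearby),
    }
    total = 0.0
    for word in wanted:
        total += max((w for name, w in SOURCE_WEIGHTS if word in sources[name]), default=0.0)
    return total / len(wanted)


def closest_match(description: str, element_type: str, elements: List[Element]) -> Element:
    """Best-scoring element of the requested type; raises ValueError if there is none."""
    candidates = [e for e in elements if e.tag == element_type]
    return max(candidates, key=lambda e: score(description, e))


page = [
    Element("span", "1", {"class": "cart-count", "aria-label": "Items in cart"}, nearby="Shopping cart"),
    Element("span", "£27.00", {"class": "price"}, nearby="Blouse"),
    Element("button", "Add to cart", {"id": "add-blouse"}),
]

best = closest_match("Cart item count", "span", page)
print(best.attrs["class"])
```

The cart badge wins even though its visible text is just “1”, because its class, `aria-label` and surrounding text all describe a cart; the price span shares no words with the description and scores zero.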

Semantic descriptions can be tested against an application:

Coupled with our simple-to-use visual test authoring, this lets test steps be defined in a form much closer to natural language, with no need to learn or maintain vast selector libraries:

In more complex UIs, it is easy to find scenarios where a simple semantic description would produce multiple matches: for example, a list of products returned by a search on an e-commerce site, where there are multiple “Add to cart” buttons:

In this scenario, we can ask the smart selector technology to first match an outer container and then confine subsequent searches to that container. This is the equivalent of a manual test step such as:

“Click the Add To Cart button in the Blouse Product Card”

First, the container is identified:

Using the “in the” form allows us to chain our searches. A test step in the visual test authoring interface looks like this:

The model can be customized to accommodate all kinds of container structures, such as search results, product lists and page subsections, regardless of sort order.
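The “in the” chaining can be sketched in the same spirit: split the step target on “ in the ”, resolve the outermost container first, then search only inside it. The Python below uses a hypothetical tree of product cards and a simple word-overlap ranking (ties go to the smaller, more specific node) in place of a learned model; names such as `Node`, `find` and the `data-sku` attribute are invented for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass
class Node:
    """A hypothetical, minimal UI tree node."""
    tag: str
    text: str = ""
    attrs: dict = field(default_factory=dict)
    children: List["Node"] = field(default_factory=list)

    def walk(self) -> Iterator["Node"]:
        """Yield this node and all of its descendants, depth first."""
        yield self
        for child in self.children:
            yield from child.walk()

    def all_text(self) -> str:
        """Text and attribute values of this node and all of its descendants."""
        own = " ".join([self.text, *map(str, self.attrs.values())])
        return " ".join([own, *(child.all_text() for child in self.children)])


def words(s: str) -> set:
    """Lower-case alphanumeric words in a string."""
    return set(re.findall(r"[a-z0-9]+", s.lower()))


def rank(description: str, node: Node) -> tuple:
    """Shared words with the description first; on ties, prefer the smaller, more specific node."""
    node_words = words(node.tag + " " + node.all_text())
    return (len(words(description) & node_words), -len(node_words))


def find(description: str, element_type: str, root: Node) -> Node:
    """Resolve 'X in the Y in the Z': match Z inside root, then Y inside Z, then X inside Y."""
    target, *containers = description.split(" in the ")
    scope = root
    for container in reversed(containers):  # outermost container first
        candidates = [n for n in scope.walk() if n.children and n is not scope]
        scope = max(candidates, key=lambda n: rank(container, n))
    candidates = [n for n in scope.walk() if n.tag == element_type]
    return max(candidates, key=lambda n: rank(target, n))


def product_card(name: str, price: str) -> Node:
    """Build a hypothetical product card with a heading, a price and an Add to cart button."""
    return Node("div", attrs={"class": "product-card"}, children=[
        Node("h3", name),
        Node("span", price, {"class": "price"}),
        Node("button", "Add to cart", {"data-sku": name.lower()}),
    ])


results = Node("div", attrs={"class": "search-results"}, children=[
    product_card("Printed Dress", "£26.00"),
    product_card("Blouse", "£27.00"),
    product_card("Printed Chiffon Dress", "£16.40"),
])

button = find("Add To Cart button in the Blouse Product Card", "button", results)
print(button.attrs["data-sku"])
```

The search resolves to the button whose `data-sku` is `blouse`. Without the container clause, all three buttons contain every word of “Add To Cart button”, so only the tie-breaker, not the description, would separate them. The split on “ in the ” is case-sensitive and assumes that phrase never appears inside a description itself.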

To show our AI in action, we switched on the highlighting feature during execution, which marks the identified matches during an end-to-end (E2E) test that uses no traditional selectors:

Coupled with our execution and results framework, which is built for concurrency, this lets teams quickly build cross-platform tests that run concurrently, eliminating maintenance headaches. Tests can be run from CI/CD tools and on cloud infrastructure providers.

Most importantly, they are resilient to application change.